The second summer: Going multithreaded!

Introduction

In the first summer, I looked at performance optimisations and how they affect run-time optimisations. Please see https://laidlawscholars.network/users/268073-leaderboard/posts/50926-compiling-and-optimising-effects-of-different-flags-on-performance-of-c-applications for more details on what I did that summer. Especially do read Report 5 and the video associated with it.

Going forward

With a good chunk done in the first summer, there were a couple of paths I could take:

- Take a look at other languages. The first summer focused on a compiled language - C++. What happens if we try with Java for instance? Or Python (which is fully interpreted)? While analysing performance in JIT-based languages is interesting (especially with Java's JIT making surprisingly powerful optimisations on the fly that can equal C++ in some cases), it's a bit of a pain to find out what exactly they are doing (given that analysing their assembly is awkward when optimisations are possible on the fly).

- Go multithreaded! That is, analyse performance when we try to run more than one thread per process. The thought of multithreading by itself immediately brings interesting variables that must then be considered. For instance, the operating system being used. For a single thread it is easy (because all it needs to do is give a core for that process!), But when you add multiple threads it becomes far more complex. For instance, if a thread is sleeping, how should the scheduler react? How should it react if a thread is running for too long and other threads need that CPU power? There are a lot of variables in play.

In the end, after discussion with my supervisor, I picked the second option.

Impact of the virus

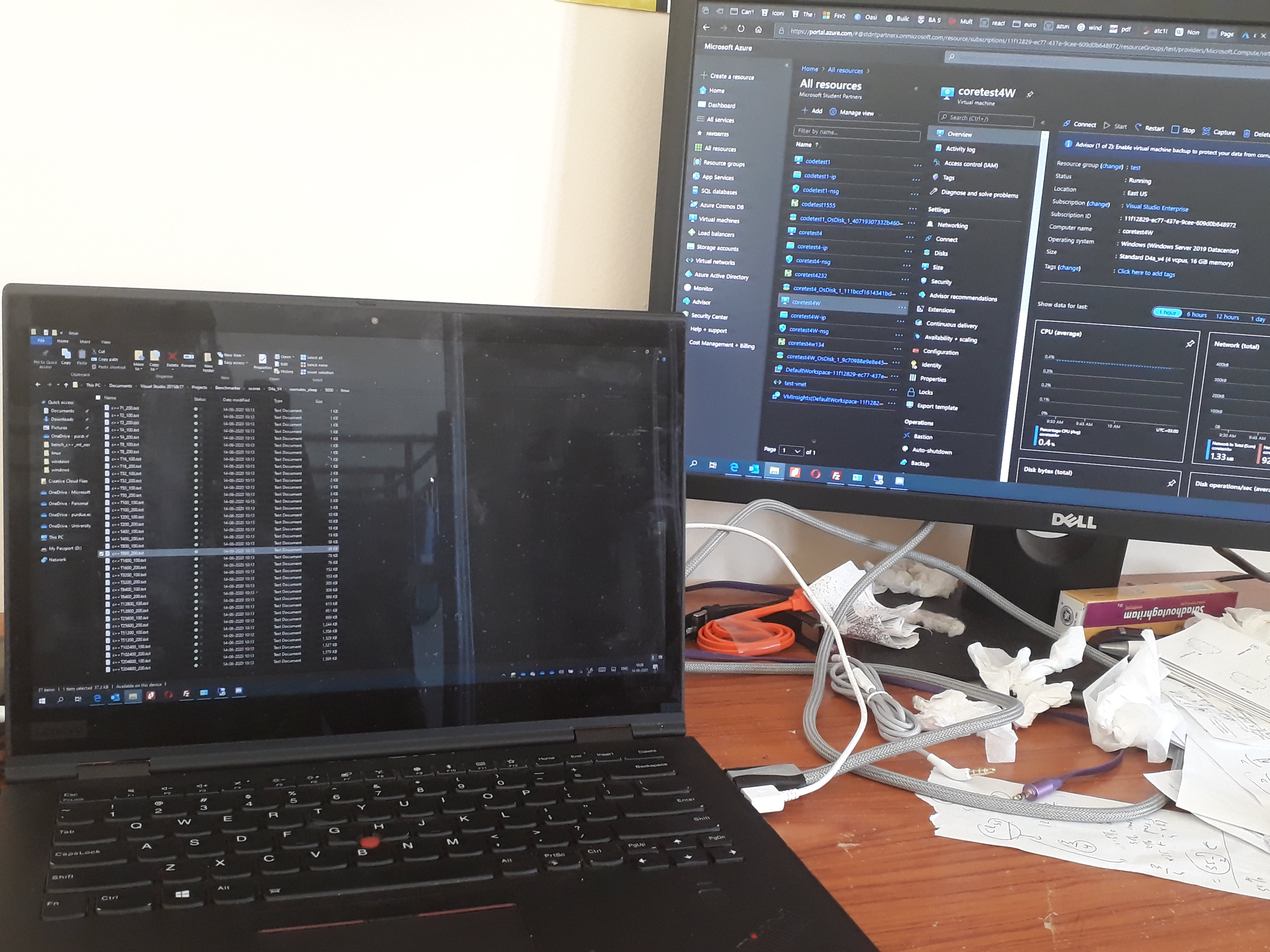

I was supposed to do all my work at St Andrews this summer, but the coronavirus meant that I would do it at home. This did not affect my plans badly though. All I needed is a table, an external monitor, and a chair.

I would usually work on the lab computers at St Andrews. For this summer, I decided to use Microsoft Azure virtual machines, mainly because Microsoft graciously gives me $150 of credit every month and also because I have full control of the machine and can ensure that no other process that someone else may run on that machine could disrupt my work (which is very important when going multithreaded).

Overall not too bad, compared with the disruption some other scholars had to face for this summer.

A little background

The main operating systems I decided to run my program were on Linux (Ubuntu Server) and Windows. Mainly because Azure mainly supports these two OSs, but also because they use fundamentally different scheduler designs.

Linux since the 2.6.21 kernel uses a completely fair scheduler kernel (CFS). It basically tries to keep everything fair, and uses self-balancing red-black trees to keep track of the processes. This is O(log n), technically log2 n which is actually pretty high. For instance, when n = 100, log2 n > 5, and that's huge. In theory only, because as we'll see, Linux handles this very well in practice.

Windows (since the NT era at least) uses a multi-level feedback queue. There are k process priorities possible, and for each of the k priorities is a double-ended queue. All that is required is enqueuing (adding to the end) and dequeuing (removing from the front), both of which are constant-time. Hence Windows' scheduler is O(1), as k is a constant.

From the above description, it looks like Windows will easily knock Linux out of the park. But it's not as easy as that. For instance, Linux used to have a O(1) scheduler at a point. Why would they forego O(1) for O(log n)? And it's possible that Windows' O(1) scheduler has a high constant factor that could nullify any possible benefits.

Scripting

As with last summer, I had to run my program with various parameters and collect the results reported. This time, I decided to use scripts rather than writing custom programs last semester, because that's what everyone does! With a virtual machine, it was easy for me to check whether it had completed running - as I only needed to go to the Azure portal.

Preliminary results

In the most simplified case of running n threads at once and running the full benchmark load, well Windows and Linux both are neck to neck. Even with n = 6400 the difference is within the margin of error.

To reduce the effect of context switching (which is known to be effective), I grouped the n workloads into (n/k) groups, where k is the number of threads supported by the VM (here it's 4). The idea was to give each thread the time it needs without risking being context-switched. Here too the results were neck-to-neck. Even modifying the benchmarks to eliminate mutexes (by reporting only time taken) did not make much difference.

I decided to go further. To start with, notice that even where n is large, in reality it's much lesser than that, as many of the threads would have died very quickly while the other threads will keep running for hours, hence any potential difference is masked. So to reduce the effect of the workload itself, I sharply decreased the time taken for each thread to run and raised the number of threads in return. However, my Linux script could not run properly, most likely due to one of Linux's crappy ulimits - software-imposed limits on things like virtual memory etc. No matter how much I increased the limit, I would get out of resource errors (and I checked the VM portal to make sure it wasn't memory running out). On the flip side, Windows does not have arbitrary limits and my script ran successfully.

The next step was to shift from a single process - N thread analysis to running N processes in a single thread. The work done is the same - rather instead of threads, we are individually creating N processes. Here though we can see a significant overhead - at least 3 - 5% to over 18% for large number of threads when the workload is miniscule. This shows that the penalty for creating a process compared to that of a thread is quite a bit higher. However, if a workload takes enough time, then the process overhead isn't as bad as it may seem. To explain this in a real-life, consider a web browser. Modern browsers are sandboxed, which means that each tab runs in its own thread, or process. If each tab gets its own process, then if you close the browser and reload it, the browser has to create every process again, which is slower (though modern browsers mask this in many ways, like delaying the refresh of tabs).

Going further

These are the plans I have right now, and are subject to change based on the results:

- Testing with I/O bound or a mix of them - till date all analysis has been purely CPU-bound - neither the threads nor the CPU is ever idle - when a thread is done, they die. Introducing I/O bound cases into the mix (formally when they don't use their timeslice) adds a lot more complexity - while it's easy to make a process "seem" I/O bound (an example is to just make the thread sleep), analysing, and configuring the parameters, is trickier.

- Start hacking - looking deeper into what makes the CFS scheduler what it is by analysing the source code, and also potentially looking at other O(1) schedulers if they are available (looking at FreeBSD's ULE), but this also depends on Azure supporting them (which makes hacking tricky as I'll need to commit the changes to the live VM, which is tricky in a VM).

- Maybe if time permits, investigate the effects of NUMA nodes on multithreaded performance. This issue (with Windows 10 Pro) had cropped up in the media some time ago, as Windows 10 Pro was faking NUMA nodes on consumer processors with high thread counts. Even if the actual processor did not have NUMA nodes in reality, the performance loss was quite measurable compared to Enterprise, which did not do this.

As usual, hope you enjoy this short blog post, and do let me know if you have any questions.

The image shows my current workspace. Not particularly neat, but has what I need.

Please sign in

If you are a registered user on Laidlaw Scholars Network, please sign in